Currently, we cannot connect to Power BI dataflows from Excel Power Query. And as we also cannot use custom connectors in there, we need a different alternative. I’m going to describe how to consume the results from Power BI dataflows as csv-files from Azure Blob Storage. I’m using the “AzureStorage.Blobs” function here, which authenticates with the key of the storage account. So just be aware that this means that you cannot apply role based security here. (Edit: A native connector that supports that is supposed to come soon: Microsoft Power Platform dataflows connector available in Excel – Power Platform Release Plan | Microsoft Docs)

General setup

Usually, when a Power BI dataflow is refreshed, a csv-file will be written to a storage in the background that holds the results of a refreshed entity. But you have the option to “bring your own Data Lake” where these files are written to instead. This then allows you to consume the csv files with the “AzureStorage.Blobs” function in Power Query for Excel instead.

Pragmatic Works have a very good tutorial on how to setup the storage in Azure and connect a new Power BI workspace to it as a prerequisite.

Details to connect to dataflows from Excel

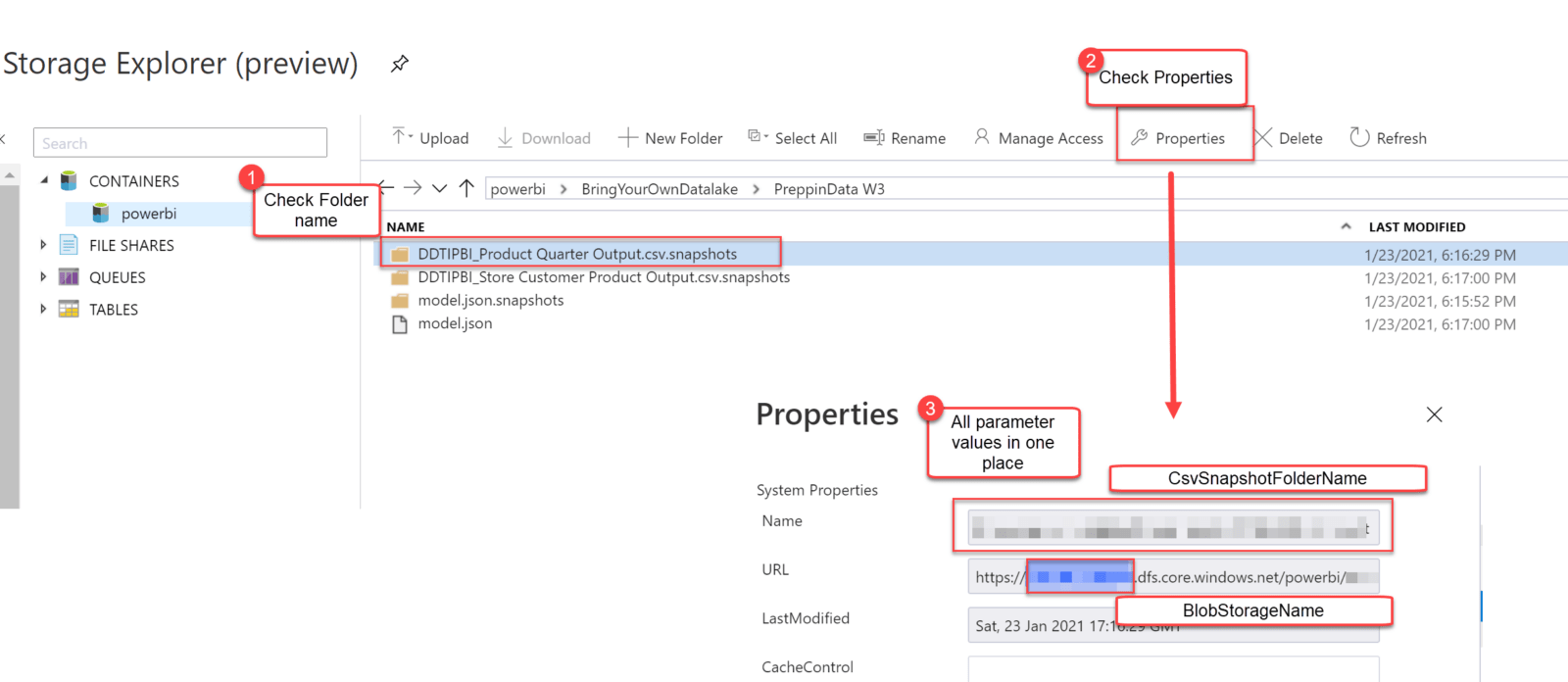

Once you’ve connected your dataflow workspace to the blob storage you can perform your first dataflow refresh. This will cause a folder for your workspace being created automatically in the blog storage. More folders will be created inside this folder. They are suffixed “.snapshot” and there will be one for the model.json files and one folder for the csv files of each entity (see picture below).

Each dataflow refresh will add csv files into the snapshot folders with the latest query results. So it’s a good idea to setup a flow or Logic app to automatically get rid of old files that you don’t need any more.

Import csv from Azure Blob Storage

To make sure to always grab the latest data from your storage, you can use my custom function below. This function will also allocate the correct column names and types from the model.json to the csv file. Just notice that complex types are not supported in dataflows, as csv files cannot represent them. Also, currently the duration type is not supported as well and will prohibit to save the dataflow. Also, only use this function in Excel. I will published a modified function for Power BI and dataflows shortly. Using this function in dataflows might even destroy your dataflow !!

This file contains hidden or bidirectional Unicode text that may be interpreted or compiled differently than what appears below. To review, open the file in an editor that reveals hidden Unicode characters.

Learn more about bidirectional Unicode characters

| let func = | |

| ( | |

| BlobStorageName as text, | |

| CsvSnapshotFolderName as text, | |

| optional optionalLocale as text, | |

| optional optionalDelimiter as text, | |

| optional optionalEncoding as number | |

| ) => | |

| let | |

| Locale = if optionalLocale = null then "en-US" else optionalLocale, | |

| Delimiter = if optionalDelimiter = null then "," else optionalDelimiter, | |

| Encoding = if optionalEncoding = null then 1252 else Number.From(optionalEncoding), | |

| fnTypeAsText = (TypeAsText as text) => | |

| Record.Field( | |

| [ | |

| boolean = type logical, | |

| int64 = Int64.Type, | |

| time = type time, | |

| date = type date, | |

| dateTime = type datetime, | |

| dateTimeOffset = type datetimezone, | |

| double = type number, | |

| decimal = Percentage.Type, | |

| //duration = type duration, — current bug in dataflow | |

| string = type text | |

| ], | |

| TypeAsText | |

| ), | |

| Source = AzureStorage.Blobs(BlobStorageName), | |

| powerbi1 = Source{[Name = "powerbi"]}[Data], | |

| FilterFolderName = Table.SelectRows(powerbi1, each Text.Contains([Name], CsvSnapshotFolderName)), | |

| FilterOutSnapshotExtension = Table.SelectRows( | |

| FilterFolderName, | |

| each ([Extension] <> ".snapshots") | |

| ), | |

| SortDescendingAndGrabFirst = Table.Buffer( | |

| Table.Sort(FilterOutSnapshotExtension, {{"Date modified", Order.Descending}}) | |

| ){0}, | |

| CsvContent = SortDescendingAndGrabFirst[Content], | |

| #"Imported CSV" = Csv.Document( | |

| CsvContent, | |

| [Delimiter = Delimiter, Encoding = Encoding, QuoteStyle = QuoteStyle.None] | |

| ), | |

| JsonPath = Text.BeforeDelimiter(CsvSnapshotFolderName, "/", 1), | |

| GetModelJSON = Table.SelectRows(powerbi1, each [Name] = JsonPath & "/model.json")[Content]{0}, | |

| #"Imported JSON" = Json.Document(GetModelJSON, Encoding), | |

| entities = #"Imported JSON"[entities], | |

| #"Converted to Table" = Table.FromList( | |

| entities, | |

| Splitter.SplitByNothing(), | |

| null, | |

| null, | |

| ExtraValues.Error | |

| ), | |

| #"Expanded Column1" = Table.ExpandRecordColumn( | |

| #"Converted to Table", | |

| "Column1", | |

| {"$type", "name", "description", "pbi:refreshPolicy", "attributes", "partitions"} | |

| ), | |

| FilterRelevantFile = Table.SelectRows( | |

| #"Expanded Column1", | |

| each ([name] = Text.BetweenDelimiters(CsvSnapshotFolderName, "/", ".", 1)) | |

| ), | |

| #"Removed Other Columns" = Table.SelectColumns(FilterRelevantFile, {"attributes"}), | |

| #"Expanded attributes" = Table.ExpandListColumn(#"Removed Other Columns", "attributes"), | |

| #"Expanded attributes1" = Table.ExpandRecordColumn( | |

| #"Expanded attributes", | |

| "attributes", | |

| {"name", "dataType"} | |

| ), | |

| NewColumnNames = #"Expanded attributes1"[name], | |

| RenamedColumns = Table.RenameColumns( | |

| #"Imported CSV", | |

| List.Zip({Table.ColumnNames(#"Imported CSV"), NewColumnNames}) | |

| ), | |

| #"Changed Type" = Table.TransformColumnTypes( | |

| RenamedColumns, | |

| List.Zip( | |

| { | |

| Table.ColumnNames(RenamedColumns), | |

| List.Transform(#"Expanded attributes1"[dataType], fnTypeAsText) | |

| } | |

| ), | |

| Locale | |

| ) | |

| in | |

| #"Changed Type" , | |

| documentation = [ | |

| Documentation.Name = " PowerBI.DataflowsWorkaround ", | |

| Documentation.Description = " Workaround to retrieve dataflow results in Power Query in Excel. ", | |

| Documentation.LongDescription = " Workaround to retrieve dataflow results in Power Query in Excel. ", | |

| Documentation.Category = " Accessing Data Functions ", | |

| Documentation.Source = " www.TheBIccountant.com, see: https://wp.me/p6lgsG-2n4 . ", | |

| Documentation.Version = " 1.0 ", | |

| Documentation.Author = " Imke Feldmann ", | |

| Documentation.Examples = {[Description = " ", | |

| Code = " ", | |

| Result = " "]}] | |

| in | |

| Value.ReplaceType(func, Value.ReplaceMetadata(Value.Type(func), documentation)) |

You will be prompted to authenticate to the storage account. Choose “Account key” and enter the Account key for the blob storage. You can find that in the “Access key”-section in Azure:

Just fill in the following parameters after calling the function:

- BlobStorageName: Name of your blob storage

- CsvSnapshotFolderName: You can grab this value and the previous BlobStorageName easily if you check the folder in the storage explorer and click on properties:

- optionalLocale: Default is set to “en-US”, change it if needed

- optionalDelimiter: Default encoding is set to comma, change if needed

- optionalEncoding: Default is 1252, change if needed

You need to use this function for each entity/csv file that you want to use from your dataflow.

Limitations

- As stated before, authentication is on the level of the whole blob storage.

- Using the “AzureStorage.Blobs” function to retrieve the data means that the metadata from all files in that storage account will be downloaded. That’s another reason not to use one storage account for multiple dataflows.

Summary

With a proper setup of an own Azure Blob Storage, you can access the results of Power BI dataflows in Excel. A custom function helps to efficiently grab the data and stitches the column names and types from the model.json back to the table.

Being able to consume dataflow results directly from the Azure Blob Storage opens many more use cases. However, authentication is fairly limited today. Feel free to add some votes to the ideas section in the community, like:

Enjoy & stay queryious 😉

Excellent workaround Imke. A few weeks ago I advanced that solution in my blog in Spanish to connect dataflows in power query in excel: https://powerbisp.com/consumir-dataflows-en-power-query-de-excel although I did not go into detail the code by which we could get the data from ADLS2. I used the AzureStorage.Datalake connector which is equally valid. I’m glad I was on the right track.I love your work and you are a reference in power query. Regards

Hi Francisco,

thank you and yes, that’s such a useful workaround. I’m not surprised that others came up with that as well.

/Imke

Your post helped us to build whole new Architecture, in which we are counsuming in Excel a Dataflow processed data in our daily work

Good morning Imke, I appreciate your recommendation and comments, please; in Excel get data from PowerBI dataset, Is it possible to work in Power Query with data from a pivottable? How should I do to get data from the pivottable?

Unfortunately microsoft seem to have scrubbed all mention of the excel connection that was in the timeline. This workaround may be the only option for people in the forseeable future so many thanks

Pingback: What’s New In Dataflows – April 2021 | Microsoft Power BI Blog – drawn.bigwinlong.xyz

Thanks Imke!

When will you upload a PQ function for PowerBI?

I have several entities/tables in my flow and I am struggling to put the corresponding Column Names from model.json. I wish they just had the Column Names in the csv snapshot files.

Thanks!

Michael

Is =PowerPlatform.Dataflows(null) an option now (then drill through to the specific dataflow table required), or maybe I am misunderstanding the post? Sorry if that is the case.