If you want to collect your regularly loaded data without overwriting it or create a load log that writes the load activites with a timestamp into a table, you need to create a query that adds new lines to it’s own latest version.

In both cases you add a column that returns the current day and time using DateTime.LocalNow()

Load Log the PowerBI-way

Then using Power BI it’s fairly easy: Use the R-extension to perform an append query to the external txt or csv-file like described in this post (incremental load):

require(gdata)

write.table(trim(dataset), file=”your filepath & filename.txt”, sep = “\t”, row.names = FALSE, append = TRUE, column.names=FALSE)

plot(dataset);

Load Log the Power Query-way

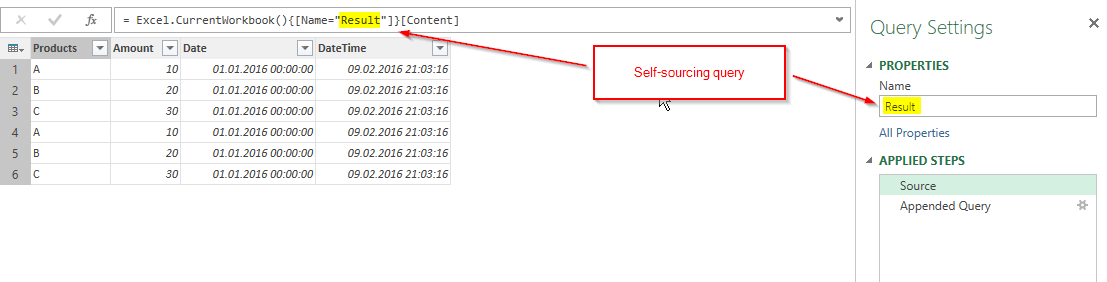

In Excel’s Power Query it’s a bit trickier, because you cannot export your queries and therefore need to create the consolidated table in your workbook itself. And it must be a self-referencing query or to be more precise: a self-sourcing query: A query that takes its latest output as its new input, then appends the new data and overwrites the old result with the new one.

How do we deal with this? When we write the query we don’t have the result yet to be referenced?

To solve this henn-&egg challenge we just take a dummy query as the first input (here: duplicate our ImportData (1)) and rename it “Result” (2). Then add the new figures (Append (3) – ImportData (4)) and delete the “Added Custom”-step (5) before loading it to a table.

With this we’ve created the table that we’re going to reference from now on. To do so we edit this query and replace the reference to the “ImportData” by the reference to our new “Result:

If you load this query now, there will be the data from your original source 3 times. My suggestion is that you delete all rows except the headers and start your import procedure fresh from here instead of manually selecting the rows that you want to keep.

Error-proofing collecting queries

If you’re not aiming for the log but for a daily collection routine, you might want to provide for unintended double-refreshes. Yes, you could delete the additional data but this is error-prone. So you reformat your “DateTime”-column from the beginning as “date” only. Then check all your columns and “Remove Duplicates”. This will only keep one load per day (provided the loads where the same).

This technique will not throw a circular-dependency-error, because it references the table in the sheet and not the query itself.

ME_PQCreateLoadHistoryV2.xlsx

Enjoy & stay queryious 🙂

Edit 25-June-2016: Just came across Hilma Buchta’s blogpost from last year that describes exactly the same technique!

Edit 19-Nov-2016: For PowerBI, you can find an improved version without the need to use R here.

Please vote for the Power BI feature request for incremental load here!

Edit 03-Jan-2017: If you struggle with applying the self-referencing-table-technique to your model, here is a much better explanation: http://exceleratorbi.com.au/cleansing-data-power-query/

Just the answer i was looking for. I can’t believe such a necessary pattern as appending data ONLY without ‘refreshing’ the existing data in the table is not a standard option in the append menu.

I needed the above for a solution to compare stock levels over time at each month end. Each month extracting with a query refresh SKU stock levels with a date stamp and power querying them into power pivot datamodel. Once in the backend they needed to stay there (and NOT be deleted by the query resfresh). And then next month append the new SKU stock levels. With some simple time intelligence this helped stock level movement reporting.

Or is there an easier pattern to use in Powerpivot / Power BI ?

So simple a solution. Thanks.

Yes … Must check if there is a feature request for it that we can vote for 🙂

Depending on your web-source sometimes you can prevent old values from disappearing by parametrizing your query-link like this:

let

Para = Date.From(DateTime.LocalNow()),

Month = Text.PadStart(Number.ToText(Date.Month(Para)-1),2,”0″),

Day = Text.PadStart(Number.ToText(Date.Day(Para)),2,”0″),

= Csv.Document(Web.Contents(“http://real-chart.finance.yahoo.com/table.csv?s=RDSB.L&a=03&b=12&c=1996&d=“&Month&”&e=“&Day&”&f=2016&g=w&ignore=.csv”), Delimiter=”,”,Encoding=1252])

This keeps the starting-value fix and automatically adjusts the end- values to the current day.

But if the old values disappear from the source or you simply want to be on the safe side, then I see no alternative at the moment.

Hi Imke! Great idea! How did I missed this post? It caused me to invent a bicycle for refresh logging…

1st query:

let

Init = #table({“LastRefresh”},{{DateTime.FixedLocalNow()}})

in

Init

2nd query (from the table renamed to “tRefresh”):

let

Source = Excel.CurrentWorkbook(){[Name=”tRefresh”]}[Content],

AddRefresh = List.Combine({Source[LastRefresh],{DateTime.FixedLocalNow()}}),

ToTable = Table.FromList(AddRefresh, Splitter.SplitByNothing(), {“LastRefresh”}, null, ExtraValues.Error)

in

ToTable

Hey Maxim, thanks for the reply 🙂

Will have a closer look at it tomorrow!

Yes, this is very much alike 🙂

The way I’ve implemented it, it now creates an additional timestamp-entry in a table every time I hit the refresh-button – like magic 🙂 So this could nicely be used for protocols or other.

Pingback: Power query source from folder - append data?

Great post – Would it be possible to post the detailed instructions for installing gdate for Power BI desktop? I’m a financial analyst, so I can’t follow all the various technical instructions.

Hi Oliver,

I’m using RStudio for my R. There you go to Tools -> Install Packages -> In the Window “Packages” you start to type “gd” and then a popup-window will open that shows the available downloads, of which one is gdata -> OK and install.

In this video you can see the whole installation-process of R incl. the load of a package: https://www.youtube.com/watch?v=S6iYIjuLSa0

Thanks – that resolved the issue!

Is it possible to write the output file to OneDrive for business or SharePoint 365?

Haven’t tried that & don’t know how to do this in R unfortunately – sorry.

Pingback: Self Referencing Tables in Power Query - Excelerator BI

getting this: error

Feedback Type:

Frown (Error)

Timestamp:

2017-06-27T20:02:53.3731924Z

Local Time:

2017-06-27T16:02:53.3731924-04:00

Product Version:

2.46.4732.581 (PBIDesktop) (x64)

Release:

May 2017

IE Version:

11.1358.14393.0

OS Version:

Microsoft Windows NT 10.0.14393.0 (x64 en-US)

CLR Version:

4.6.2. or later [Release Number = 394802]

Workbook Package Info:

1* – en-US, Query Groups: 0, fastCombine: Disabled, runBackgroundAnalysis: True.

Peak Working Set:

498 MB

Private Memory:

429 MB

Peak Virtual Memory:

34.2 GB

Error Message:

R script error.

Loading required package: gdata

gdata: Unable to locate valid perl interpreter

gdata:

gdata: read.xls() will be unable to read Excel XLS and XLSX files

gdata: unless the ‘perl=’ argument is used to specify the location of a

gdata: valid perl intrpreter.

gdata:

gdata: (To avoid display of this message in the future, please ensure

gdata: perl is installed and available on the executable search path.)

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLX’ (Excel 97-2004) files.

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLSX’ (Excel 2007+) files.

gdata: Run the function ‘installXLSXsupport()’

gdata: to automatically download and install the perl

gdata: libaries needed to support Excel XLS and XLSX formats.

Attaching package: ‘gdata’

The following object is masked from ‘package:stats’:

nobs

The following object is masked from ‘package:utils’:

object.size

The following object is masked from ‘package:base’:

startsWith

Error: unexpected input in “write.table(trim(dataset), file=””

Execution halted

User ID:

820dea21-fab4-4831-a846-cf7d8390c450

Session ID:

5308cac0-adbd-4d00-a4e7-95b4d45b66e1

Telemetry Enabled:

True

Model Default Mode:

Import

Enabled Preview Features:

PBI_PbiServiceLiveConnect

PBI_daxTemplatesEnabled

PBI_relativeDateSlicer

Disabled Preview Features:

PBI_Impala

PBI_Snowflake

PBI_shapeMapVisualEnabled

PBI_EnableReportTheme

PBI_allowBiDiCrossFilterInDirectQuery

PBI_esriEnabled

PBI_pivotTableVisualEnabled

PBI_numericSlicerEnabled

PBI_SpanishLinguisticsEnabled

Disabled DirectQuery Options:

PBI_DirectQuery_Unrestricted

Cloud:

GlobalCloud

Activity ID:

5308cac0-adbd-4d00-a4e7-95b4d45b66e1

Time:

Tue Jun 27 2017 16:02:43 GMT-0400 (Eastern Daylight Time)

Version:

2.46.4732.581 (PBIDesktop)

Client Error Code:

ServiceErrorToClientError

Error Details:

R script error.

Loading required package: gdata

gdata: Unable to locate valid perl interpreter

gdata:

gdata: read.xls() will be unable to read Excel XLS and XLSX files

gdata: unless the ‘perl=’ argument is used to specify the location of a

gdata: valid perl intrpreter.

gdata:

gdata: (To avoid display of this message in the future, please ensure

gdata: perl is installed and available on the executable search path.)

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLX’ (Excel 97-2004) files.

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLSX’ (Excel 2007+) files.

gdata: Run the function ‘installXLSXsupport()’

gdata: to automatically download and install the perl

gdata: libaries needed to support Excel XLS and XLSX formats.

Attaching package: ‘gdata’

The following object is masked from ‘package:stats’:

nobs

The following object is masked from ‘package:utils’:

object.size

The following object is masked from ‘package:base’:

startsWith

Error: unexpected input in “write.table(trim(dataset), file=””

Execution halted

Stack Trace:

Microsoft.PowerBI.ExploreServiceCommon.ScriptHandlerException: R script error.

Loading required package: gdata

gdata: Unable to locate valid perl interpreter

gdata:

gdata: read.xls() will be unable to read Excel XLS and XLSX files

gdata: unless the ‘perl=’ argument is used to specify the location of a

gdata: valid perl intrpreter.

gdata:

gdata: (To avoid display of this message in the future, please ensure

gdata: perl is installed and available on the executable search path.)

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLX’ (Excel 97-2004) files.

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLSX’ (Excel 2007+) files.

gdata: Run the function ‘installXLSXsupport()’

gdata: to automatically download and install the perl

gdata: libaries needed to support Excel XLS and XLSX formats.

Attaching package: ‘gdata’

The following object is masked from ‘package:stats’:

nobs

The following object is masked from ‘package:utils’:

object.size

The following object is masked from ‘package:base’:

startsWith

Error: unexpected input in “write.table(trim(dataset), file=””

Execution halted

—> Microsoft.PowerBI.Radio.RScriptRuntimeException: R script error.

Loading required package: gdata

gdata: Unable to locate valid perl interpreter

gdata:

gdata: read.xls() will be unable to read Excel XLS and XLSX files

gdata: unless the ‘perl=’ argument is used to specify the location of a

gdata: valid perl intrpreter.

gdata:

gdata: (To avoid display of this message in the future, please ensure

gdata: perl is installed and available on the executable search path.)

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLX’ (Excel 97-2004) files.

gdata: Unable to load perl libaries needed by read.xls()

gdata: to support ‘XLSX’ (Excel 2007+) files.

gdata: Run the function ‘installXLSXsupport()’

gdata: to automatically download and install the perl

gdata: libaries needed to support Excel XLS and XLSX formats.

Attaching package: ‘gdata’

The following object is masked from ‘package:stats’:

nobs

The following object is masked from ‘package:utils’:

object.size

The following object is masked from ‘package:base’:

startsWith

Error: unexpected input in “write.table(trim(dataset), file=””

Execution halted

at Microsoft.PowerBI.Radio.RScriptWrapper.RunScript(String originalScript, Int32 timeoutMs)

at Microsoft.PowerBI.Client.Windows.R.RScriptHandler.GenerateVisual(ScriptHandlerOptions options)

— End of inner exception stack trace —

at Microsoft.PowerBI.Client.Windows.R.RScriptHandler.GenerateVisual(ScriptHandlerOptions options)

at Microsoft.PowerBI.ExploreServiceCommon.ScriptVisualCommandFlow.RunInternal(Stream dataShapeResultStream, QueryBindingDescriptor& bindingDescriptor)

at Microsoft.PowerBI.ExploreServiceCommon.ScriptVisualCommandFlow.Run(Stream dataShapeResultStream, QueryBindingDescriptor& bindingDescriptor)

at Microsoft.PowerBI.ExploreHost.SemanticQuery.ExecuteSemanticQueryFlow.TransformDataShapeResult(QueryCommand transformCommand, String dataShapeId, SemanticQueryDataShapeCommand command, Stream dataShapeResultStream, QueryBindingDescriptor& bindingDescriptor)

at Microsoft.PowerBI.ExploreHost.SemanticQuery.ExecuteSemanticQueryFlow.ProcessAndWriteDataQuery(IQueryResultDataWriter queryResultDataWriter, DataShapeGenerationContext dsqGenContext, EntityDataModel model, DataQuery query, ServiceErrorStatusCode& serviceErrorStatusCode)

at Microsoft.PowerBI.ExploreHost.SemanticQuery.ExecuteSemanticQueryFlow.ProcessAndWriteSemanticQueryCommands(IQueryResultsWriter queryResultsWriter, ExecuteSemanticQueryRequest request, IConceptualSchema conceptualSchema, EntityDataModel model)

DPI Scale:

100%

Supported Services:

Power BI

Formulas:

There seems to be a problem with your R-script or R-installation. Did it run successfully in R-Studio?

I read your post with great interest as I am trying to link a Google spreadsheet to Excel. I am able to open the link in Excel via the Web. However, I have been trying to create a power query which would enable me to edit the data in Excel without overwriting the data when the sheet is refreshed with new rows. I tried to follow your instructions but have not succeeded. What I have done is open the sheet via the Web. Then in following your instructions I just don’t know whether the original table or appended query should be loaded or not. And which table should actually have the additional column DateTime to remove duplicates. i sincerely hope you’ll have patience with me – I am a bit desperate after two days of trying all kinds of possibilities. Thank you in advance.

Hi Alice,

sorry to hear that it doesn’t work for you. Please check this video: https://www.youtube.com/watch?v=xDVUUcbX13g

Cheers, Imke

I was not able to use the R code which you posted, I received an error which told me that something was wrong with my installation of gdata. What am I doing wrong here?

Hi Christopher,

you can find another version of the R-export that doesn’t require the gdata-package here: https://github.com/ImkeF/M/blob/master/LibraryR/Table.ExportToCsv.pq

Pingback: Incremental Refresh For Cloud Data Sources in Power BI Service (Pro) | Excel Inside

I am trying to use the code above, but I am getting an error due to the ‘;’ character which prevents me from using the column.names=FALSE command. I suspect this is why, when I eliminate said command, my CSV file merges the columns as well. Any help would be much appreciated.

Oh, that’s an error: Have replaced the “;” by “,”

Hi Admin,

This is the functionality I’m looking for.But it’s difficult to create the same on myvown.Im trying to create similar load log but looking not able to follow all the steps you mentioned.Esspecially where to created the R_script and append queries Can you please create a detailed video or document.That will help

Hi Anil,

yes, that’s not the easiest task. You can find a little video here: https://www.youtube.com/watch?v=xDVUUcbX13g&t=1s

Please also check out the other articles that I’ve referenced at the end of the blogpost.

/Imke

Hi Admin,

Is it possible to do it in PowerBi using Power Query instead of using R or Python. Am getting cyclic reference error in PowerQuery for PowerBI.